
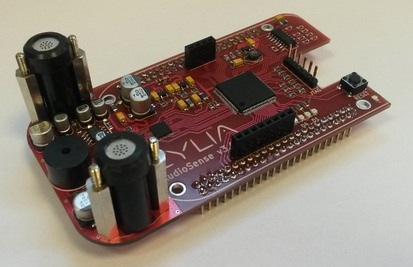

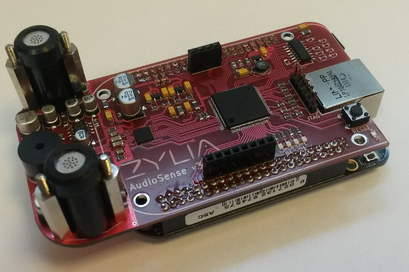

The purpose of the 3D AudioSense Cape is to record surrounding audio synchronously with other capes. Each cape, together with its BeagleBone host, becomes an audio sensor. Multiple sensors form a network that effectively creates a wireless distributed microphone array. The recorded audio stream is transmitted over a WiFi connection, while synchronization is provided by a separate low-latency radio channel. A single 3D AudioSense Cape consists of the following components:

What the 3D AudioSense Cape can do

Wireless synchronization

What makes the 3D AudioSense Cape different from a typical audio cape is its ability to synchronize wirelessly with a master reference clock signal. The synchronization allows multiple capes to capture the sound field synchronously, with a phase deviation of no more than a single sample. This makes the recorded sound suitable for processing by algorithms for sound source separation, localization and similar tasks. The synchronization signal, along with recording control information, is transmitted wirelessly from a single master device over a dedicated low-latency ISM-band radio channel. This dedicated master device generates the clock signal for the whole sensor network and also sends the control commands. This allows very high flexibility in arranging the microphone array.

For the captured audio data to be useful, the precise position of every microphone needs to be known. The 3D AudioSense Cape is equipped with a buzzer that allows it to emit audio pulses. These pulses allow other capes to localize it by measuring the sound propagation time. Precise localization is possible thanks to the wireless synchronization of all capes.

Detailed hardware description

The microphone preamplifier was specially designed to operate in the presence of electrical noise generated by digital circuitry. The analog and digital components share the same power source, which turned out to be a significant source of noise for the weak microphone audio signal. Both low- and high-frequency noise filters were applied to the power supply of the analog part of the cape. Special care was taken with the PCB layout in order to separate the analog and digital parts and their signal tracks from each other. The result is a high-quality audio signal free from unwanted digital interference.
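The buzzer-based localization described above comes down to simple arithmetic once all capes share one sample clock. The following is a minimal sketch of that arithmetic, not the project's actual implementation: the 48 kHz sample rate, the 343 m/s speed of sound, and the function names are all assumptions made for illustration.

```python
# Hypothetical sketch: distance from sound propagation time on a shared sample clock.
# Assumptions (not from the original text): 48 kHz sample rate, 343 m/s speed of sound.

SPEED_OF_SOUND_M_S = 343.0  # assumed: dry air at roughly 20 degrees Celsius


def samples_to_distance(emit_sample, arrival_sample, sample_rate_hz,
                        speed=SPEED_OF_SOUND_M_S):
    """Return the distance in metres between the emitting and the receiving cape.

    Both sample indices must refer to the same synchronized clock,
    which is what the wireless synchronization provides.
    """
    if arrival_sample < emit_sample:
        raise ValueError("arrival precedes emission; clocks are not synchronized")
    propagation_time_s = (arrival_sample - emit_sample) / sample_rate_hz
    return propagation_time_s * speed


def one_sample_error_m(sample_rate_hz, speed=SPEED_OF_SOUND_M_S):
    """Distance uncertainty caused by a clock deviation of a single sample."""
    return speed / sample_rate_hz


if __name__ == "__main__":
    fs = 48_000  # assumed sample rate
    print("distance [m]:", samples_to_distance(1000, 1480, fs))
    print("error per sample of deviation [m]:", one_sample_error_m(fs))
```

Under these assumptions, a synchronization error of one sample corresponds to a ranging error of well under a centimetre, which illustrates why the single-sample bound matters for localization.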

Analog signals from the microphone preamplifiers are connected to the line inputs of the audio converter chip. The analog input of the A/D converter provides analog programmable gain amplifiers (PGA), which can be used to adjust signal levels just before digitization. The same audio chip contains a D/A converter with a built-in headphone amplifier. The output of the amplifier is connected directly to a small speaker. The speaker is a 2 kHz resonant buzzer whose task is to transmit short audio pulses at that frequency. Connecting the buzzer to the D/A converter makes it possible to precisely control the shape of the transmitted waveform.

The radio module operates in the ISM frequency band, so no radio license is required. Special care was taken in selecting the radio module. Because it has to carry the reference clock, the radio module cannot perform any channel coding or encapsulate data in packets. This ensures low-latency signal propagation from the master transmitter device to each cape. The drawback of this solution is higher susceptibility to interference. Fortunately, appropriate FPGA modules together with an analog PLL device eliminate any impairments to the synchronization clock and data transmission.

The FPGA chip is what binds everything together. An FPGA is necessary because of the external wireless synchronization, and FPGAs are an excellent choice for time-critical signal processing. The key task of the FPGA is to decode the incoming synchronization signal by separating the audio clock from the control data stream. Timestamps received through the wireless channel are then embedded into the audio stream that comes from the A/D converter. The resulting data stream is sent to the host BeagleBone board via an SPI interface.

We are proud to announce that 3D AudioSense received an award in the Polish Product of the Future competition. The competition's objective is to promote and disseminate information on achievements in innovative techniques and technologies that have the opportunity to be applied on the Polish market. The competition is intended for innovative enterprises, research and development units, scientific institutes, research centres and individual inventors from EU Member States. The award ceremony took place on 1 December 2014 in Warsaw. It was attended by Janusz Piechociński, Deputy Prime Minister and Minister of Economy, and Bożena Lubańska-Kasprzak, Chairperson of the Polish Agency for Enterprise Development.

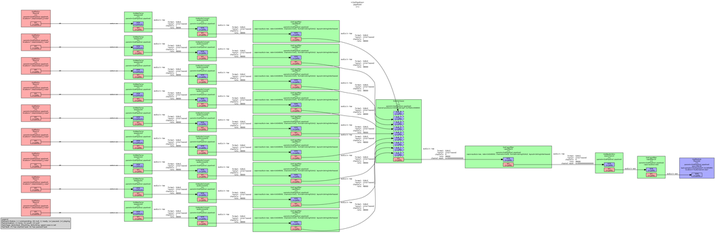

WAV is one of the most popular audio formats in use. The bit stream of a .wav file consists of a fixed-size header (44 bytes) and a payload containing PCM samples. Crucial parameters such as the sample rate, the duration of the recording, the bit depth, the number of channels and the compression code are kept in the WAV header. The payload section carries only the sound samples for each channel, in interleaved or non-interleaved order.

GStreamer is fully compatible with mono, stereo and multichannel streaming. Unfortunately, the standard wavenc element does not support encoding more than two channels. This functionality is needed in audio systems based on multichannel recording. 3DAudioSense belongs to this group, so we solved the issue by implementing an additional wavnchenc element, which enables GStreamer to produce multichannel wave files.

Imagine a situation where an application operates on samples from 9 independent sound sources. The interleave element produces a stream of raw output data as a combination of interlaced frames. Wavnchenc constructs the WAV header from data gathered from the input element and pushes it to the front of the streaming buffers. Finally, the output file can be played back, e.g. in Audacity. An example pipeline is presented below:

    gst-launch interleave name=il \

You can test our implementation of the wavnchenc plugin: just download it from our git site and follow the installation commands. Our official GitHub site is [Zylia-RnD].
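To make the header-plus-payload layout described above concrete, here is a minimal Python sketch that builds a 44-byte PCM header and interleaves nine 16-bit channels. It is an illustration only and is not the wavnchenc source: the function names are hypothetical, and it uses the plain PCM format tag, which many tools accept for more than two channels although some readers may expect the extensible WAV format instead.

```python
import struct


def build_wav_header(sample_rate, channels, bits_per_sample, n_frames):
    """Build the canonical 44-byte RIFF/WAVE header for uncompressed PCM."""
    block_align = channels * bits_per_sample // 8   # bytes per frame (all channels)
    byte_rate = sample_rate * block_align           # bytes per second
    data_size = n_frames * block_align              # payload size in bytes
    return struct.pack(
        "<4sI4s4sIHHIIHH4sI",
        b"RIFF", 36 + data_size, b"WAVE",
        b"fmt ", 16,                 # size of the fmt chunk body
        1,                           # compression code 1 = PCM
        channels, sample_rate, byte_rate, block_align, bits_per_sample,
        b"data", data_size,
    )


def interleave_16bit(channel_samples):
    """Interleave per-channel lists of signed 16-bit samples into frame order."""
    n_frames = len(channel_samples[0])
    if any(len(ch) != n_frames for ch in channel_samples):
        raise ValueError("all channels must have the same number of samples")
    payload = bytearray()
    for frame in zip(*channel_samples):             # one sample from every channel
        payload += struct.pack("<%dh" % len(frame), *frame)
    return bytes(payload)


def encode_wav(channel_samples, sample_rate):
    """Return header followed by interleaved payload as one bytes object."""
    header = build_wav_header(sample_rate, len(channel_samples), 16,
                              len(channel_samples[0]))
    return header + interleave_16bit(channel_samples)


if __name__ == "__main__":
    # Nine hypothetical sources, five samples each, at an assumed 48 kHz rate.
    sources = [[100 * ch + i for i in range(5)] for ch in range(9)]
    with open("nine_channels.wav", "wb") as f:
        f.write(encode_wav(sources, 48_000))
```

The header is emitted first and the interleaved frames follow, mirroring the order in which the text says the header is pushed in front of the streaming buffers.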

BeagleBone Black - Building Kernel and deploying it with new system distribution/Flashing onboard eMMC from microSD card

    cd beaglebone
    sudo git clone git://github.com/RobertCNelson/linux-dev.git
    cd linux-dev
    # checkout v3.8 branch
    sudo git checkout origin/am33x-v3.8 -b tmp
    cd ..

sudo nano system.sh

    # usually CC=/usr/bin/arm-linux-gnueabihf-
    CC=<your path to arm-linux-gnueabi- files>
    # LINUX KERNEL GIT:
    LINUX_GIT=<your path to linux-stable directory>
    # LINUX KERNEL START ADDRESS
    # FOR TI: OMAP3/4/AM35xx
    ZRELADDR=0x80008000
    # MMC device name (X is the letter for your SD card)
    MMC=/dev/sdX

Build kernel

Prepare environment on your host Linux machine

Deploying kernel to the existing system installation on the SD card

Deploying kernel to the new system installation on the microSD card

All of the above instructions are based on information from the following links:

    sudo tar -xf debian-7.5-console-armhf-2014-05-06.tar.xz
    cd debian-7.5-console-armhf-2014-05-06
    sudo ./setup_sdcard.sh --mmc /dev/sdb --uboot bone

Using microSD as an extra storage device

There are three ways to make your BBB boot from onboard eMMC memory:

cd tools/scripts/

    bootpart=1:2
    mmcroot=/dev/mmcblk1p2 ro
    optargs=quiet

Flashing onboard eMMC from microSD card

3DAudioSense was one of five award-winning semi-finalist projects in last month's Zacznij.biz competition for startup projects. Zacznij.biz is a contest for teams aiming to present their early-stage projects in front of a larger audience, including potential investors. It is organized by the Lewiatan Confederation and Lewiatan Business Angels.

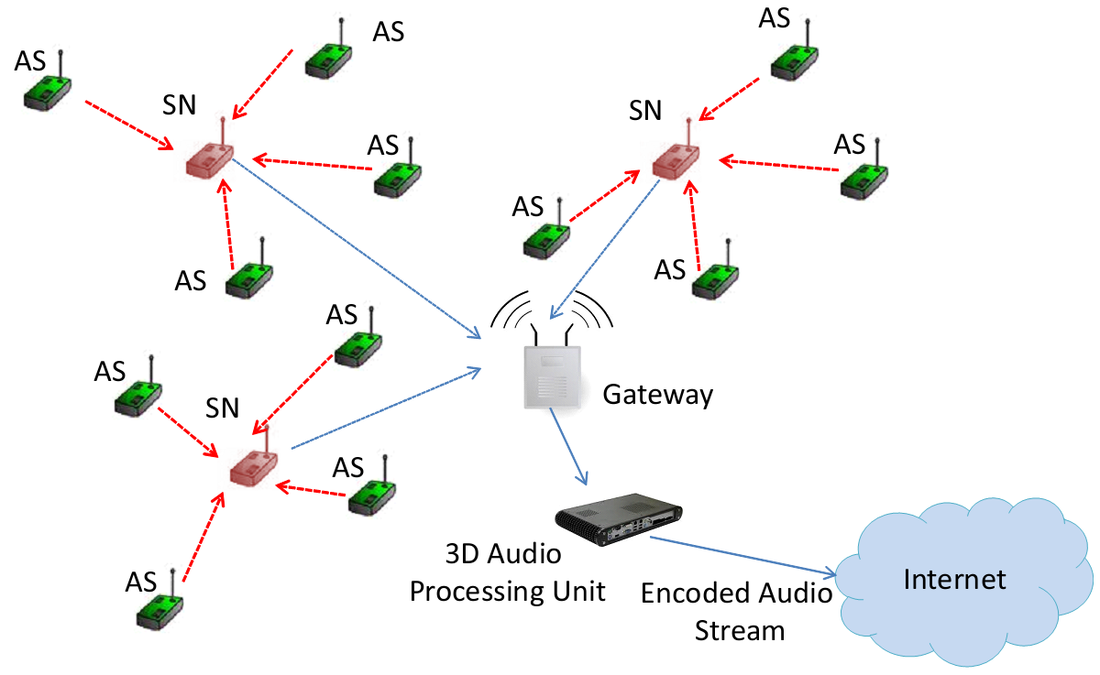

The grand jury of the contest consisted of business experts, business angels and investors who regularly decide on investments in promising projects that they later support. Out of 104 competing projects, 3DAudioSense reached the semi-final (the best 8), which entitled the team to present the project at a stand at the Grand Finale Gala on 4 March 2014 in Warsaw. The competition itself started in September 2013 and was divided into several stages, which included both preparation/practice and evaluation sessions. The last evaluation was based on a presentation given in front of the Grand Jury, which as a result decided to honor 3DAudioSense with an award.

During the Grand Finale Gala the team had a chance to present the project's details to business angels, investors and technical people. The project received a very positive reception, which led to further discussions about potential funding from VCs. Many valued guests gave their opinions and advice on how to improve the project from the business perspective. It has been a valuable lesson for the team, which is now even more certain about the project's value from both technical and business standpoints.

AudioSense is a Wireless Acoustic Sensor Network (WASN) which can be used for real-time spatial audio recording. The main goal of this approach is an object-based audio representation, i.e. signals which represent individual sound sources. Individual sound sources are extracted from a sound mixture using sound source separation algorithms, such as Independent Component Analysis. The sound object representation gives great flexibility in reconstructing the audio scene on different loudspeaker and headphone setups. One of the finest features introduced by this kind of representation is the possibility of interactive manipulation of sound objects at the receiver/user side.

System requirements

AudioSense architecture

The system consists of the WASN part and the sound processing part. The WASN is a heterogeneous network with two classes of devices:

Aggregated streams are forwarded to the nearest Gateway, which serves as an interface between the WASN and the 3D Audio Processing Unit. The 3D Audio Processing Unit performs:

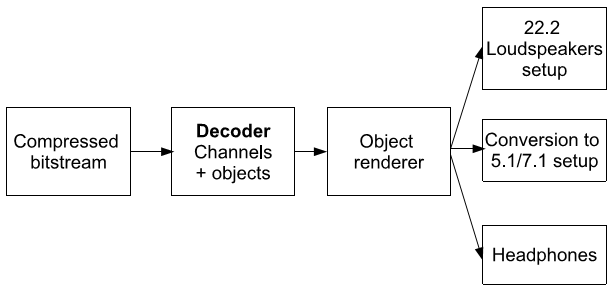

The total bit rate of MPEG-H 3D Audio varies from 256 up to 1200 kbps for 22.2-channel material. An interesting feature of MPEG-H 3D Audio is its ability to decode and render spatial audio for different loudspeaker setups and headphones, as shown below.

Hardware implementation

Acoustic Sensor

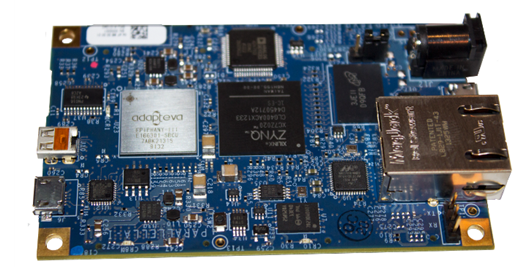

BeagleBone Black, AudioCape and microphone array (BBB extension).

3D Audio Processing Unit

This energy-efficient single-board computer is based on a Xilinx Zynq 7000 SoC (system on chip) module supported by an Epiphany III multicore accelerator. The Epiphany chip contains 16 high-performance RISC CPU cores, each of which can operate at 1 GHz and deliver 2 GFLOPS. This powerful computer consumes only up to 5 watts, which makes it very convenient to use in a WASN system like AudioSense.

Let’s imagine a situation where you can capture three-dimensional sound in an easy way, just by using small audio sensors. Then you will be able to reproduce immersive sound on any loudspeakers or headphones.

3D AudioSense is a research project which focuses on the recording and delivery of 3D audio captured using a distributed wireless sensor network. It is mainly split between two activities:

3D audio is a really exciting area for research as well as part of a growing market for new products. Therefore, we are really happy to be able to make our contribution to this topic. Check back here often or subscribe to our feed (either Twitter or RSS) to keep up to date with our work.

Author

3D AudioSense is a research project which focuses on the capturing of spatial audio scene using a distributed wireless sensor network.

Archives

March 2015
